Slopwatch: linuxsecurity.com and Other 'Linux' Sites With LLM Slop

SEO spam with machine-generated fodder, plus a person for whom English isn't a first language

We've not really had to do a "Slopwatch" lately; the good news is that we just haven't stumbled upon much slop, at least not easily detectable slop. Slop, or LLM slop, was explained by Richard Stallman (RMS) recently in this new interview.

Dr. Stallman said: "The model is large. It's not that the language is large. Yes, the English language is very large. Italian is pretty large too. The point is, it's not the language that is large. It's the model that is large. The point is that when people hear about these large language models and they suppose that they are intelligent and then they see text generated by one, they believe it. They assume that this program understands the text that it generated, but they don't ever understand. If you assume that it understands, then you say, 'how did it make this stupid mistake'. Because they make mistakes all the time. They make obvious mistakes rather often but even worse is when they make unobvious mistakes, like the lawyer who asked one of these chatbots, 'give me a list of pertinent legal decisions, that are pertinent to deciding this case'. And the chatbot invented plausible looking references to non-existent cases. And the lawyer said, 'ah this is an artificial intelligence, it must know'. So he put those in his brief, in his filing, and he was laughed at by the judge once it was discovered that those cases did not exist. Those cases were fictitious because they sounded right. All that an LLM knows how to do is make text that sounds plausible. Whether it's true or not, that's something beyond the understanding of a language model. They're not designed to do that. They have no idea of semantics."

Yet now there are many fake 'articles' out there about "Linux". They barely make sense and they contain falsehoods or security FUD. This site is a repeat offender. This is its latest:

Nope, it's not a real article:

This one is not, either (different 'author'):

100% LLM slop:

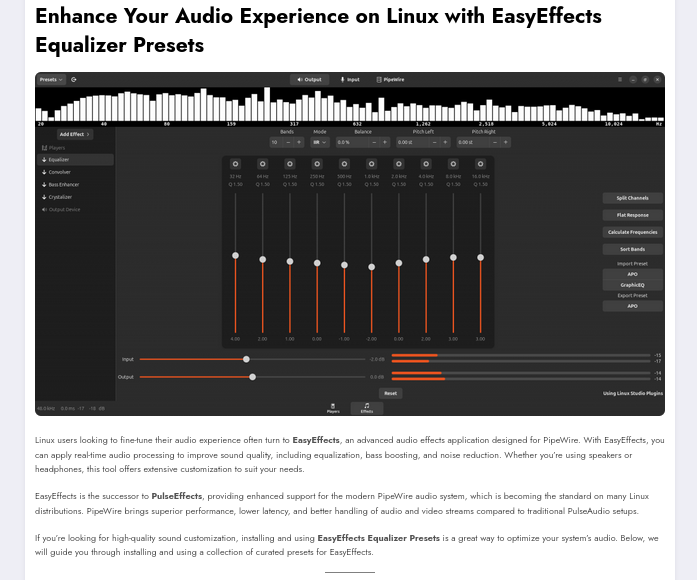

Here's another site, syndicated by Planet Ubuntu this week:

100% LLM slop, but its author is Malaysian, so maybe he is trying to compensate for weaker English:

LLM slop will always be less accurate than something actually written in sloppy English by a professional. Yes, LLMs might get the grammar right, but they have no grasp of what's true and what's fiction. Don't judge books by their cover. Good grammar may well be a 'cover-up' for a lack of merit or quality.

In short, many English-language articles on the Web must now be treated with suspicion. So we've generally lost a lot. █