Marcia Having Fun With the Most Sought-After Distro of GNU/Linux, According to DistroWatch

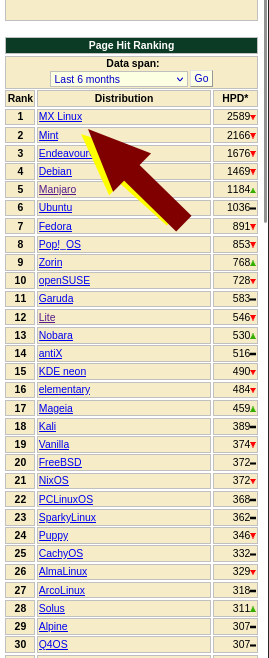

Yes, it's MXLinux, the distribution (distro) that was at the top of the DistroWatch table for a very long time, based on pageviews.

The last time we mentioned MXLinux was probably years ago. It is quite unique, not a mere clone. It is not a case of "throw in some wallpapers, choose a GTK/Qt theme, add a new logo"...

Less than 10 days ago the MXLinux team noted the fix for the Debian kernel issue (we had covered the issue itself 4 days earlier): "Debian’s 6.1.0-16 kernel with the fixes to both the ext4 corruption problem from 6.1.0-14 AND the cfg80211 modules problems is rolling out into the debian mirrors. MX users can feel free to use the new kernel. Many thanks to the debian kernel team for addressing these issues quickly. Mistakes happen, and addressing them quickly is a very good thing."

MXLinux is known to many people because of its stance on systemd, a piece of software in which mistakes happen a lot. To quote their site:

Because the use of systemd as a system and service manager has been controversial, we want to be clear about its function in MX Linux. Systemd is included by default but not enabled. You can scan your MX system and discover files bearing systemd* names, but those simply provide a compatibility hook/entrypoint when needed.

MX Linux uses systemd-shim, which emulates the systemd functions that are required to run the helpers without actually using the init service. This means that SysVinit remains the default init yet MX Linux can use crucial Debian packages that have systemd dependencies such as CUPS and Network Manager. This approach also allows the user to retain the ability to choose his/her preferred init on the boot screen (GRUB). For details, see the MX/antiX Wiki.

Our reader Marcia Wilbur has been having lots of fun with Debian derivatives, including the default system for the Raspberry Pi. Days ago she told us she had put MXLinux on a Pi: "My interest was in writing documentation/instructions for the pi so Jerry posted the alpha release - I wasn't expecting anyone to contribute, but wow, the community responded. I thought that was just *my* test version. So, I'm working on ux/writing for the @latest MXLinux for Pi."

She has been working on other things too. To quote her: "Local Text Generator with WebUI: I have a working text generator locally with WebUI - loads LLM on MXLinux, prompts responses are good but it cannot find info on xsnow. Haha.

"Got my hands on a decent machine and NVIDIA drivers work. Using CUDA - not just 500x faster than CPU but over 900x faster than when I first started running inference. Tested against a laptop I used in 2020."

We think LLMs are mostly hype, based on the hard evidence. It is also quite revealing that "Open" "AI" is running out of money to borrow, based on press reports (in Daily Links already).

If people can run LLMs locally ("offline"), then that is at least a step in the right direction.

We're planning to write many real articles, not machine-spewed words (with plausible grammar). LLMs are not properly replacing jobs; those who had a go at it are reverting to real staff, full of regret and remorse (they were sold false promises by Microsoft-bribed publishers).

The capabilities and potential of LLMs are massively exaggerated. It's like "Web3" all over again, or even "VR" (or newer buzzwords), from which Microsoft divests and runs away. Losses, losses, and more losses.

I spent my day working on the bikes (today's main activity); "smart" "assistants" and "chatbots" cannot do this, nor can they cook a decent meal. Disregard the vapourware till it cools down and people fall back to Earth. The only revolutionary thing at Microsoft this year was "pump and dump" with buzzwords. While debt soared, Microsoft tricked some people into "investing" in a bubble. This would not be possible without docile, bribed media. █