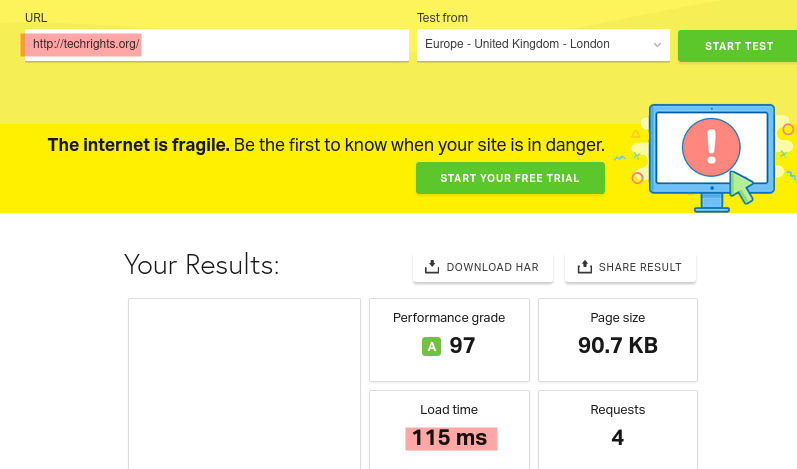

Speed of Sites Matters

Is your Web site slow? If so, what makes it slow? The back-end queries? Page size? Rendering time at the user's end? JavaScript?

Page loading times are not typically reducible to network speeds. When a request is made, how many files (and from how many domains) need to be transmitted? How high is the latency? How far is the nearest server? Once the files are received by the requester, how long will it take to render everything? Do more requests need to be made to more domains (e.g. for Google Fonts)? On a low-RAM, slow-CPU machine, can the entire page be rendered in a hundredth of a second? If not, then why not?
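To put rough numbers on the "how many files, from how many domains" question, here is a minimal Python sketch that counts the sub-resources referenced in a page's HTML and the distinct hosts they come from. It is only an approximation: the `ResourceCounter` class, the `summarise` function and the sample markup (including the `example.org` and `cdn.example.net` hosts) are made up for illustration, and it only looks at `script`, `img`, `iframe` and stylesheet `link` tags, so anything fetched later by JavaScript or CSS is not counted.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class ResourceCounter(HTMLParser):
    """Collect URLs of sub-resources referenced directly in the HTML."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.resources = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in ("script", "img", "iframe") and attrs.get("src"):
            url = attrs["src"]
        elif (tag == "link" and attrs.get("href")
              and "stylesheet" in (attrs.get("rel") or "").lower()):
            url = attrs["href"]
        else:
            return
        # Resolve relative paths against the page's own address.
        self.resources.append(urljoin(self.base_url, url))


def summarise(html, base_url):
    """Return (number of sub-resources, sorted list of distinct hosts)."""
    parser = ResourceCounter(base_url)
    parser.feed(html)
    hosts = {urlparse(u).netloc for u in parser.resources}
    return len(parser.resources), sorted(hosts)


# Hypothetical page used purely as sample input.
sample = """
<html><head>
<link rel="stylesheet" href="/style.css">
<link rel="stylesheet" href="https://fonts.googleapis.com/css?family=Roboto">
<script src="https://cdn.example.net/app.js"></script>
</head><body><img src="logo.png"></body></html>
"""

count, hosts = summarise(sample, "https://example.org/index.html")
print(count, "sub-resources from", len(hosts), "hosts:", hosts)
```

Every extra host in that list typically means another DNS lookup and another connection before anything can be rendered, which is why a page with few files served from one domain tends to feel faster than its raw size would suggest.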

In 2026, despite people's computers being more powerful than ever before (on average at least), pages often take too long to load. Fastly or Clownflare and all sorts of gimmicks cannot compensate for sites being bloated and poorly put together. A lot of sites lack actual Web pages; they're webapps, i.e. JavaScript programs that spew out HTML to render things. It's akin to a virtual machine running in a canvas that is a browser tab.

Eleven days from now there will be some work, as the host has "scheduled maintenance on connectivity between our Slough and Dublin data centres as part of an ongoing network hardening programme."

Maybe speed can be improved some more.

No downtime should be expected. This weekend we did a bunch of maintenance tasks, so the volume of output (such as articles) was lower. This week should be more productive than the last. We also need to do some upgrades.

Maybe we'll see some EPO outcomes this month with the looming strikes. Being easily accessible all the time matters to us. █